Science · Code · Curiosity

Hello World

Introducing the world we want our language model to describe.

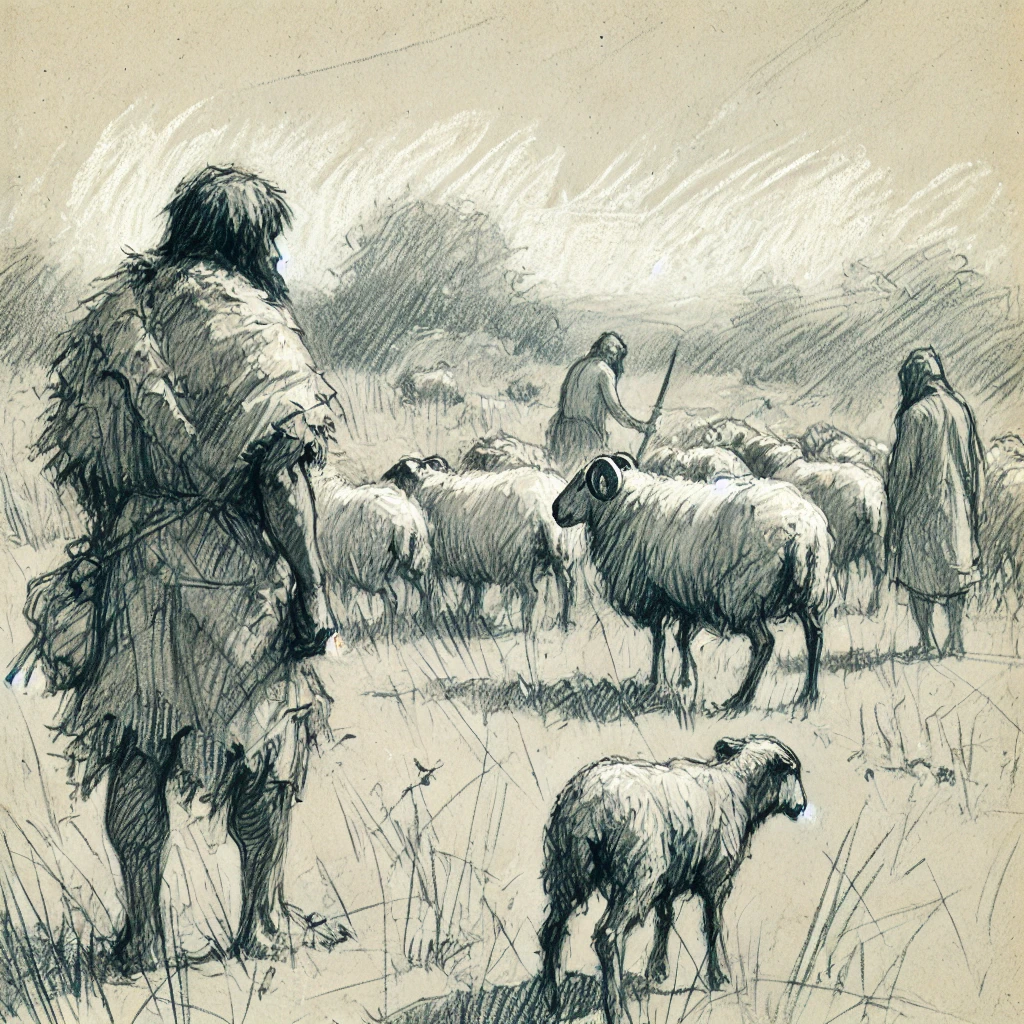

Introduction

For this exploration of language models, I'm going to imagine a group of primitive cave people trying to describe their world. We will create a simple language for them to use and, at the same time, develop an AI that learns this language. The language will be a stripped-down version of English with a very limited vocabulary and simple grammar.

Some simple sentences about sheep

In the beginning, our cave people look out at the world and see some sheep. They describe them with two sentences. These are the only possible sentences they can say.

Sheep are herbivores

Sheep are slow

- Some cave people

Tokenisation

In order to create a language model that can generate sentences, we create a neural network that learns to predict the next word in a sentence. The first step is to split the sentences into tokens. For this simple model, a token is just a word. I've also added a special token to indicate the start or end of a sentence, which will be useful later. I'm using <BR> for this token. It doesn't matter what the token is as long as it doesn't appear in a normal sentence.

If we split our two sentences into tokens we get:

- <BR>, sheep, are, herbivores, <BR>

- <BR>, sheep, are, slow, <BR>
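The splitting step can be sketched in a few lines of plain Python. This is only an illustration: the names `tokenise`, `vocab` and `pairs` are mine, and lowercasing the words is an assumption based on how the tokens are written above.

```python
BR = "<BR>"  # boundary token marking the start or end of a sentence


def tokenise(sentence):
    """Split a sentence into lowercase word tokens, wrapped in boundary tokens."""
    return [BR] + sentence.lower().split() + [BR]


sentences = ["Sheep are herbivores", "Sheep are slow"]
token_lists = [tokenise(s) for s in sentences]

# Vocabulary: every distinct token, in order of first appearance.
vocab = []
for tokens in token_lists:
    for tok in tokens:
        if tok not in vocab:
            vocab.append(tok)

# Training pairs: each token together with the token that follows it.
pairs = [(a, b) for tokens in token_lists for a, b in zip(tokens, tokens[1:])]

print(token_lists)
print(vocab)
```

The `pairs` list is the form a next-word predictor needs: an input token and the token we expect to come after it.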

Neural network

Now we'll create a neural network that takes one word (token) as input and returns its prediction of which word should follow it. The network has one input node and one output node for every possible token. To start with, we'll create the simplest possible model, in which every input is connected directly to every output.
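To make the "every input connected to every output" idea concrete, here is a small sketch in plain Python. The layout is an assumption on my part: one weight per input/output pair stored in a 5 x 5 table, with the input token encoded as a one-hot vector. The names `one_hot`, `forward` and `weights` are illustrative.

```python
import random

vocab = ["<BR>", "sheep", "are", "herbivores", "slow"]
index = {tok: i for i, tok in enumerate(vocab)}
n = len(vocab)

rng = random.Random(0)
# weights[i][j] is the connection from input node i to output node j.
weights = [[rng.uniform(-1, 1) for _ in range(n)] for _ in range(n)]


def one_hot(token):
    """Input vector: 1.0 at the token's own node, 0.0 everywhere else."""
    vec = [0.0] * n
    vec[index[token]] = 1.0
    return vec


def forward(token):
    """One score per output token: the input vector times the weight table."""
    x = one_hot(token)
    return [sum(x[i] * weights[i][j] for i in range(n)) for j in range(n)]


scores = forward("sheep")
print(dict(zip(vocab, scores)))
```

Because only one input node is ever active, the output scores are simply the row of weights belonging to the input token.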

Initially, the weight of each connection is random. We then feed in examples of tokens from our two sentences and see how closely the result matches our expectation. For example, given "sheep", we expect the output "are". The weights are then updated using back-propagation. There are many good explanations of how this works online, such as 3Blue1Brown's Neural Networks series. I'm using PyTorch, which handles the learning algorithm.
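The training loop can be sketched without any libraries. To be clear about what is assumed here: this is a dependency-free stand-in, not the PyTorch code used for the article. It assumes a softmax over the output scores with a cross-entropy loss, a learning rate of 0.1, and that one "iteration" means an update on a single input/output pair. For a single layer like this one, the gradient with respect to the active row of weights is just the predicted probabilities minus the target.

```python
import math
import random

vocab = ["<BR>", "sheep", "are", "herbivores", "slow"]
index = {tok: i for i, tok in enumerate(vocab)}
n = len(vocab)

# (input token, expected next token) pairs from the two sentences.
pairs = [
    ("<BR>", "sheep"), ("sheep", "are"), ("are", "herbivores"), ("herbivores", "<BR>"),
    ("<BR>", "sheep"), ("sheep", "are"), ("are", "slow"), ("slow", "<BR>"),
]

rng = random.Random(0)
weights = [[rng.uniform(-1, 1) for _ in range(n)] for _ in range(n)]


def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


learning_rate = 0.1  # assumed value
for step in range(10_000):
    src, dst = pairs[step % len(pairs)]
    row = weights[index[src]]  # the only weights a one-hot input touches
    probs = softmax(row)
    for j in range(n):
        target = 1.0 if j == index[dst] else 0.0
        row[j] -= learning_rate * (probs[j] - target)  # cross-entropy gradient step


def predict(token):
    """Next-token probabilities for a given input token."""
    return dict(zip(vocab, softmax(weights[index[token]])))


for tok in vocab:
    print(tok, {k: round(v, 2) for k, v in predict(tok).items()})
```

After training, "sheep" should lead almost entirely to "are", while "are" should split its probability roughly evenly between "herbivores" and "slow", since both appear equally often in the training sentences.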

Results

After 10 000 iterations, we can look at the new weights of the network. If we just show connections with a positive weight, the network looks like this.

With so few tokens and connections, it's relatively easy to verify that the network has learnt our sentences. We can also start with the token <BR>, see what token the network predicts next, then feed that token back into the network and keep going until we hit another <BR> token.
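The generation loop might look something like the sketch below. The probability table is hypothetical: it stands in for the trained network's output, with idealised values. I've also assumed that the next token is sampled from the predicted probabilities rather than always taking the single most likely one, since otherwise only one of the two sentences would ever come out.

```python
import random

BR = "<BR>"

# Hypothetical next-token probabilities standing in for the trained network.
next_token_probs = {
    "<BR>": {"sheep": 1.0},
    "sheep": {"are": 1.0},
    "are": {"herbivores": 0.5, "slow": 0.5},
    "herbivores": {"<BR>": 1.0},
    "slow": {"<BR>": 1.0},
}


def generate(rng, max_tokens=10):
    """Start at <BR> and keep predicting until the next <BR> (or a length cap)."""
    words = []
    current = BR
    for _ in range(max_tokens):
        options = next_token_probs[current]
        current = rng.choices(list(options), weights=list(options.values()))[0]
        if current == BR:
            break
        words.append(current)
    return " ".join(words)


rng = random.Random(0)
samples = {generate(rng) for _ in range(50)}
print(samples)
```

The `max_tokens` cap is a safety net: a network that had not learnt to emit `<BR>` could otherwise loop forever.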

We can use the transitions from input to output to generate a Markov chain which clearly shows the two sentences we can generate.
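As a rough illustration of what such a chain contains, the sketch below builds the transition table by counting which token follows which in the two training sentences. Note that this counts directly from the data rather than reading the transitions off the trained weights, so it is an approximation of the approach described above. With a softmax output, a single-layer model of this kind would be expected to converge towards these same frequencies.

```python
from collections import Counter, defaultdict

token_lists = [
    ["<BR>", "sheep", "are", "herbivores", "<BR>"],
    ["<BR>", "sheep", "are", "slow", "<BR>"],
]

# Count how often each token is followed by each other token.
counts = defaultdict(Counter)
for tokens in token_lists:
    for src, dst in zip(tokens, tokens[1:]):
        counts[src][dst] += 1

# Normalise the counts into transition probabilities.
chain = {
    src: {dst: c / sum(following.values()) for dst, c in following.items()}
    for src, following in counts.items()
}

for src, following in chain.items():
    print(src, "->", following)
```

The only branching point is "are", which is what makes the chain produce exactly two sentences.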

Conclusion

We created a simple neural network model that consisted of a single matrix. It was able to learn how to generate two simple sentences about sheep. In the next article, we'll look at how to represent words with vectors.
